Definition: Cognitive Automation

Cognitive automation is the combination of AI capabilities - including natural language processing, machine learning, and computer vision - with automation infrastructure to handle business tasks that involve unstructured data, exceptions, and judgment calls.

Core characteristics of cognitive automation

Cognitive automation systems go beyond executing predefined rules. They interpret context, learn from outcomes, and handle inputs that vary in format, language, or content in ways no fixed script can anticipate.

- Unstructured input handling: emails, documents, images, voice

- Exception management without human escalation for routine cases

- Contextual reasoning across multiple data sources

- Continuous improvement from feedback and correction signals

Cognitive automation vs. RPA

Robotic process automation follows deterministic scripts - the same input always produces the same output by design. It breaks when inputs deviate from the expected format. Cognitive automation adds an AI layer that reads and interprets the input before deciding what to do with it. The practical difference is the exception rate: RPA achieves near-100 percent automation for well-structured, predictable tasks but struggles with the cases that do not fit the template, which in many real workflows make up 15 to 40 percent of volume. Cognitive automation handles those cases, either by resolving them autonomously or by pre-processing them so that a human can decide quickly.

Importance of cognitive automation in enterprise AI

Most enterprise processes contain a mix of routine mechanical steps and judgment-intensive exceptions. RPA addresses the mechanical steps; cognitive automation addresses the exceptions. Gartner projects that organisations applying intelligent automation will achieve 30 percent faster decision-making and 20 percent higher operational efficiency by 2026. For mid-sized companies, the business case is often straightforward: calculate how much time teams spend handling exceptions in an otherwise automated process. That figure is the upper bound on the value cognitive automation can recover; the realised value depends on what share of those exceptions the system resolves reliably.
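
The time-on-exceptions calculation can be sketched as a few lines of arithmetic. This is a minimal sketch: the function names and all input figures (5,000 items per month, a 25 percent exception rate, 12 minutes per exception, 60 percent of exceptions automatable) are hypothetical placeholders, not benchmarks.

```python
def monthly_exception_hours(volume: int, exception_rate: float, minutes_per_exception: float) -> float:
    """Hours per month that people spend handling exceptions."""
    return volume * exception_rate * minutes_per_exception / 60


def recoverable_hours(volume: int, exception_rate: float,
                      minutes_per_exception: float, share_automatable: float) -> float:
    """Hours recovered if `share_automatable` of exceptions need no human touch."""
    return monthly_exception_hours(volume, exception_rate, minutes_per_exception) * share_automatable


# Hypothetical process: 5,000 items/month, 25% exceptions, 12 minutes each.
total = monthly_exception_hours(5_000, 0.25, 12)       # 250 hours/month
realistic = recoverable_hours(5_000, 0.25, 12, 0.6)    # 150 hours/month
```

The first figure is the ceiling; the second reflects the more realistic case where only part of the exception load can be handed to the system.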

Methods and procedures for cognitive automation

Three deployment patterns account for the majority of enterprise cognitive automation implementations.

Augmented RPA with AI pre-processing

The most common entry point. An existing RPA bot handles structured cases; an AI layer pre-processes inputs before they reach the bot. Unstructured emails are classified and key data extracted before the bot reads them. Documents are parsed and normalised before entering an ERP workflow. This pattern adds cognitive capability to existing automation without replacing it.

- AI classifies incoming items and extracts structured fields

- Validated structured data passed to existing RPA or ERP automation

- Only genuinely ambiguous or novel cases escalated to human review

- Exception rate for the RPA layer can drop from 20-40 percent to under 5 percent
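
The pre-processing steps listed above can be sketched as a single routing function: classify, extract, validate, then hand off or escalate. This is a minimal sketch under stated assumptions: the keyword rules stand in for a trained classifier, and the categories, required fields, `INV-`/`ORD-` ID formats and the 0.5 confidence cut-off are all hypothetical.

```python
import re
from dataclasses import dataclass, field

# Hypothetical keyword rules standing in for a trained classifier.
CATEGORY_KEYWORDS = {
    "invoice": ("invoice", "amount due"),
    "order_change": ("change order", "update quantity"),
}
# Fields the downstream RPA bot needs for each category (assumed).
REQUIRED_FIELDS = {"invoice": {"invoice_id", "amount"}, "order_change": {"order_id"}}
CONFIDENCE_THRESHOLD = 0.5  # assumed cut-off; tune per deployment


@dataclass
class Routed:
    destination: str  # "rpa" or "human_review"
    category: str
    fields: dict = field(default_factory=dict)
    reason: str = ""


def classify(text: str) -> tuple[str, float]:
    """Return (category, confidence); confidence = share of keywords found."""
    lowered = text.lower()
    for category, keywords in CATEGORY_KEYWORDS.items():
        hits = sum(k in lowered for k in keywords)
        if hits:
            return category, hits / len(keywords)
    return "unknown", 0.0


def extract_fields(text: str) -> dict:
    """Pull structured fields with regexes; the ID formats are hypothetical."""
    fields = {}
    if m := re.search(r"\b(INV-\d+)\b", text):
        fields["invoice_id"] = m.group(1)
    if m := re.search(r"amount due:?\s*(\d+(?:\.\d+)?)", text, re.IGNORECASE):
        fields["amount"] = float(m.group(1))
    if m := re.search(r"\b(ORD-\d+)\b", text):
        fields["order_id"] = m.group(1)
    return fields


def preprocess(text: str) -> Routed:
    category, confidence = classify(text)
    if category == "unknown" or confidence < CONFIDENCE_THRESHOLD:
        return Routed("human_review", category, reason="low classification confidence")
    fields = extract_fields(text)
    missing = REQUIRED_FIELDS[category] - set(fields)
    if missing:
        return Routed("human_review", category, fields, f"missing fields: {sorted(missing)}")
    # Only validated, structured data reaches the existing bot.
    return Routed("rpa", category, fields)
```

For example, `preprocess("Invoice INV-1001, amount due: 1250.00")` routes to `"rpa"` with both fields filled, while an order-change request without an order number goes to human review. The existing bot never sees raw text.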

End-to-end AI agents

For workflows with high exception rates or inherently unstructured inputs, a full AI agent replaces the bot. The agent reads the raw input, determines what action is needed, executes it across connected systems, and logs the reasoning. This pattern is common in customer service triage, supplier query handling, and compliance document review. Intelligent document processing often serves as the ingestion layer, transforming raw inputs into structured data the agent can act on.
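
The read, decide, execute and log cycle of an end-to-end agent can be sketched as below. This is a minimal sketch, not a production agent: `plan_actions` is a hypothetical rule-based stand-in for the model's reasoning step, and `lookup_order` and `send_reply` are hypothetical stubs for connected-system calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ReasoningLog:
    """Append-only record of what the agent decided and why."""
    entries: list = field(default_factory=list)

    def record(self, step: str, detail) -> None:
        self.entries.append({"step": step, "detail": detail})


# Hypothetical connected-system actions; real ones would call ERP/CRM APIs.
def lookup_order(order_id: str) -> dict:
    return {"order_id": order_id, "status": "shipped"}


def send_reply(to: str, body: str) -> dict:
    return {"sent_to": to, "body": body}


TOOLS: dict[str, Callable[..., dict]] = {"lookup_order": lookup_order}


def plan_actions(message: dict) -> list[tuple[str, dict, str]]:
    """Stand-in for the model's reasoning step: (tool, args, rationale) triples."""
    if "where is my order" in message["body"].lower() and message.get("order_id"):
        return [("lookup_order", {"order_id": message["order_id"]}, "customer asks for order status")]
    return []


def run_agent(message: dict, log: ReasoningLog):
    plan = plan_actions(message)
    if not plan:
        log.record("escalate", "no confident plan; routed to a human")
        return None
    result = None
    for tool_name, args, rationale in plan:
        log.record("reason", rationale)
        result = TOOLS[tool_name](**args)
        log.record("act", {"tool": tool_name, "args": args, "result": result})
    # This toy planner always ends with an order lookup, so `result` is an order record.
    reply = send_reply(message["sender"], f"Order {result['order_id']} status: {result['status']}")
    log.record("act", {"tool": "send_reply", "result": reply})
    return reply
```

The log captures the rationale before each action, which is what makes the agent's decisions auditable after the fact; anything the planner cannot handle is escalated rather than guessed.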

Human-assisted cognitive loops

For high-stakes or regulated decisions, cognitive automation generates a structured recommendation that a human reviews and approves. The AI does the cognitive heavy lifting - reading, extracting, reasoning - and the human provides the sign-off. This is the human-in-the-loop design applied to judgment-intensive workflows and remains the standard for processes where full autonomy is not permissible.
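
A human-assisted loop hinges on one rule: the AI may prepare a recommendation, but nothing executes without a recorded sign-off. The sketch below enforces that rule in code; the `Recommendation` structure and its fields are hypothetical illustrations, not a specific product's data model.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Recommendation:
    """AI-prepared proposal; nothing executes until a human signs off."""
    case_id: str
    proposed_action: str
    evidence: list = field(default_factory=list)  # extracted facts shown to the reviewer
    rationale: str = ""
    status: Status = Status.PENDING
    reviewer: Optional[str] = None

    def approve(self, reviewer: str) -> None:
        self.status, self.reviewer = Status.APPROVED, reviewer

    def reject(self, reviewer: str) -> None:
        self.status, self.reviewer = Status.REJECTED, reviewer


def execute(rec: Recommendation, action: Callable[[str, str], str]) -> str:
    # Hard gate: pending or rejected recommendations can never run.
    if rec.status is not Status.APPROVED:
        raise PermissionError(f"{rec.case_id}: human approval required before execution")
    return action(rec.case_id, rec.proposed_action)
```

Recording the reviewer alongside the decision also produces the documented human in the decision chain that regulated processes need.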

Important KPIs for cognitive automation

Measuring cognitive automation requires metrics that capture both automation depth and decision quality.

Operational KPIs

- Exception rate: percentage of items requiring human intervention (target under 10 percent for mature deployments)

- Straight-through processing rate: items resolved end-to-end without human touch

- Average handling time per item: including AI processing and any residual human steps

- Queue size and aging: backlog of unprocessed items, monitored in real time
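
The four operational KPIs above can be computed from per-item processing records. This is a minimal sketch assuming a hypothetical `Item` record with timestamps, a count of human touches, and machine versus manual processing time; real systems would pull these fields from their workflow logs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Item:
    received: datetime
    completed: Optional[datetime]  # None means still waiting in the queue
    human_touches: int             # manual interventions on this item
    ai_seconds: float              # machine processing time
    human_seconds: float           # residual manual time


def operational_kpis(items: list, now: datetime) -> dict:
    done = [i for i in items if i.completed is not None]
    queued = [i for i in items if i.completed is None]
    touched = sum(1 for i in done if i.human_touches > 0)
    n = len(done)
    return {
        # Over completed items, exception rate and STP rate are complements.
        "exception_rate": touched / n if n else 0.0,
        "stp_rate": (n - touched) / n if n else 0.0,
        # Handling time includes AI processing plus any residual human steps.
        "avg_handling_seconds": sum(i.ai_seconds + i.human_seconds for i in done) / n if n else 0.0,
        "queue_size": len(queued),
        "oldest_queued": max((now - i.received for i in queued), default=timedelta(0)),
    }
```

Tracking `oldest_queued` alongside `queue_size` catches the case where the queue looks small but a few items have been stuck for hours.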

Financial and strategic KPIs

The most direct financial metric is cost per transaction - total workflow cost divided by volume, measured before and after deployment. Gartner benchmarks 20 percent higher operational efficiency for well-executed intelligent automation programmes. For mid-sized companies, the practical framing is full-time equivalent hours recovered per quarter, converted to headcount capacity available for higher-value work.
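
Cost per transaction and FTE hours recovered reduce to simple arithmetic. This is a sketch with hypothetical inputs: the before/after costs, the quarterly volume, the 6 and 2 minute handling times, and the assumption that one FTE works about 480 hours per quarter are illustrative, not benchmarks.

```python
def cost_per_transaction(total_workflow_cost: float, volume: int) -> float:
    return total_workflow_cost / volume


def fte_hours_recovered(quarterly_volume: int, minutes_before: float, minutes_after: float) -> float:
    """Human hours no longer spent per quarter."""
    return quarterly_volume * (minutes_before - minutes_after) / 60


def fte_equivalent(hours: float, hours_per_fte_quarter: float = 480.0) -> float:
    # Assumption: one FTE works about 40 h/week for 12 weeks per quarter.
    return hours / hours_per_fte_quarter


# Hypothetical figures; the "after" cost includes AI licence and run costs.
before = cost_per_transaction(60_000, 10_000)  # 6.0 per item
after = cost_per_transaction(38_000, 10_000)   # 3.8 per item
hours = fte_hours_recovered(30_000, 6, 2)      # 2,000 hours per quarter
capacity = fte_equivalent(hours)               # about 4.2 FTE of capacity
```

Including the AI run costs in the "after" figure matters: cost per transaction should compare total workflow cost, not just the human labour that was removed.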

Quality KPIs

Decision accuracy on AI-resolved cases versus human-resolved cases is the critical quality check. A deployment that achieves a 90 percent automation rate but carries a 15 percent error rate on automated decisions can produce negative net value once the cost of detecting and correcting those errors is counted. Accuracy should be measured at launch and retested quarterly - real-world input patterns drift over time and require model recalibration to maintain performance.
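
Whether a given error rate wipes out the value depends on how expensive each error is. The sketch below makes that trade-off explicit; the flat saving per item and flat cost per error are simplifying assumptions, and the example figures are hypothetical.

```python
def net_value(volume: int, automation_rate: float, error_rate: float,
              saving_per_item: float, cost_per_error: float) -> float:
    """Net value of automated decisions after error costs.

    Each automated item saves `saving_per_item`; each wrong automated decision
    costs `cost_per_error` to detect, correct and remediate (assumed flat).
    """
    automated = volume * automation_rate
    return automated * (saving_per_item - error_rate * cost_per_error)


def break_even_cost_per_error(saving_per_item: float, error_rate: float) -> float:
    """Error cost above which automation destroys value at this error rate."""
    return saving_per_item / error_rate


# 1,000 items, 90% automated, 15% wrong, 5 EUR saved per item (hypothetical):
costly = net_value(1_000, 0.9, 0.15, 5, 50)  # errors cost 50 each: -2,250 (negative)
cheap = net_value(1_000, 0.9, 0.15, 5, 20)   # errors cost 20 each: +1,800
threshold = break_even_cost_per_error(5, 0.15)  # about 33.3 per error
```

With these inputs, any error costing more than about 33 to fix turns the deployment value-negative, which is why accuracy has to be measured alongside automation rate.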

Risk factors and controls for cognitive automation

Cognitive automation introduces failure modes that do not exist in pure rule-based RPA.

Silent errors in AI judgment

Unlike an RPA bot that crashes visibly when it encounters an unexpected input, a cognitive automation system may produce a wrong but confident-looking output. Downstream systems act on the incorrect data before anyone notices. Controls include output sampling, downstream sanity checks, and exception queues for low-confidence decisions.

- Define confidence thresholds below which items are routed to human review

- Monitor output distribution for anomalies (sudden spikes in one decision category)

- Run blind accuracy audits: have humans re-evaluate a sample of AI decisions monthly
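
The three controls above can each be expressed in a few lines. This is a minimal sketch: the 0.85 confidence threshold, the 2x spike ratio and the minimum count of 20 are hypothetical defaults that would need calibrating against real audit results.

```python
import random
from collections import Counter

CONFIDENCE_THRESHOLD = 0.85  # assumed; calibrate against audit results


def route(confidence: float) -> str:
    """Send low-confidence decisions to a human instead of acting on them."""
    return "auto" if confidence >= CONFIDENCE_THRESHOLD else "human_review"


def category_spikes(baseline: Counter, current: Counter,
                    ratio: float = 2.0, min_count: int = 20) -> list:
    """Flag decision categories whose share jumped by `ratio` or more vs. baseline."""
    base_total = sum(baseline.values())
    cur_total = sum(current.values())
    flagged = []
    for category, count in current.items():
        if count < min_count:
            continue  # ignore noise from tiny counts
        base_share = baseline.get(category, 0) / base_total if base_total else 0.0
        cur_share = count / cur_total
        # A category never seen in the baseline is always worth a look.
        if base_share == 0 or cur_share / base_share >= ratio:
            flagged.append(category)
    return sorted(flagged)


def blind_audit_sample(decision_ids, k: int, seed=None) -> list:
    """Random sample of AI decisions for humans to re-evaluate without seeing the AI output."""
    ids = list(decision_ids)
    return random.Random(seed).sample(ids, min(k, len(ids)))
```

For example, if a category that made up 10 percent of last month's decisions suddenly accounts for 40 percent, `category_spikes` flags it even though every individual decision may have looked confident.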

Scope creep and automation debt

Teams that start with a narrow cognitive automation deployment often expand scope without adjusting governance. Each new document type, exception category, or connected system adds complexity. Without structured workflow automation governance, the deployment accumulates fragile edge-case logic that is difficult to debug or improve.

Over-automation of regulated decisions

In financial services, healthcare, and HR, certain decisions must have a documented human in the decision chain. Automating these fully violates regulatory requirements regardless of AI accuracy. Map regulatory requirements before deployment and hardcode human-in-the-loop checkpoints for decisions that cannot be delegated to AI under applicable law.
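
A hardcoded checkpoint means the regulatory check runs before, and independently of, any confidence score. In the sketch below the decision-type names are hypothetical examples; in practice the set would come from the regulatory mapping done before deployment.

```python
# Illustrative decision types only; the real set comes from the
# pre-deployment regulatory mapping, not from this sketch.
HUMAN_SIGNOFF_REQUIRED = frozenset({"loan_decline", "candidate_rejection", "treatment_change"})


def dispatch(decision_type: str, confidence: float, threshold: float = 0.9) -> str:
    # The regulatory check runs first and cannot be overridden by model confidence.
    if decision_type in HUMAN_SIGNOFF_REQUIRED:
        return "human_signoff"
    return "auto" if confidence >= threshold else "human_review"
```

Using a `frozenset` keeps the list immutable at runtime, so no downstream code path can quietly remove a protected decision type.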

Practical example

A mid-sized German logistics company with 400 employees processed roughly 1,800 incoming shipment exception emails per month - damaged goods notices, customs queries, and delivery discrepancies - through a shared inbox reviewed by three coordinators. Resolution required reading the email, identifying the shipment, checking the ERP status, and either auto-resolving or escalating to the relevant department. The team deployed a cognitive automation agent that reads each email, classifies the exception type, pulls ERP status, and resolves routine cases autonomously while routing complex ones with a structured summary.

- 71 percent of incoming exceptions resolved autonomously within 4 minutes

- Average human handling time for escalated cases reduced from 22 minutes to 7 minutes

- Coordinator capacity freed from inbox triage, redeployed to carrier negotiation

- No backlog items older than 2 hours, compared with a previous daily average backlog of 340 items
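
A back-of-envelope check shows what the escalation figures mean in coordinator time. This assumes the 71 percent autonomous rate applies to the full 1,800 monthly emails; it counts only the time saved on escalated cases, because the example does not state how long the now-automated cases used to take, so the real saving is at least this large.

```python
monthly_volume = 1_800
autonomous_pct = 71  # share resolved without human touch, in percent

# Integer arithmetic avoids floating-point rounding on the case count.
escalated = monthly_volume * (100 - autonomous_pct) // 100  # 522 emails/month
minutes_saved_per_escalation = 22 - 7                       # 15 minutes each
hours_saved_on_escalations = escalated * minutes_saved_per_escalation / 60  # 130.5 h/month
```

Roughly 130 hours per month on escalations alone is a substantial share of three coordinators' working time, which is consistent with the capacity they were able to redeploy.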

Current developments and effects

Cognitive automation is converging with broader hyperautomation and agentic AI trends, changing how enterprises think about automation architecture.

Agentic cognitive automation

The distinction between a cognitive automation deployment and an AI agent is narrowing. Modern deployments use multi-agent systems in which specialist models handle classification, extraction, and decision-making, with independent steps running in parallel, orchestrated by a controller agent. This architecture can handle considerably more complex workflows than single-model cognitive automation and is becoming the default for new enterprise deployments.
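
The controller pattern can be sketched with Python's standard thread pool: classification and extraction do not depend on each other, so they run concurrently, and the decision step waits for both. The three specialist functions are hypothetical rule-based stubs standing in for separate models, and the `SHP-` shipment ID format is an assumption.

```python
from concurrent.futures import ThreadPoolExecutor


def classification_agent(doc: str) -> str:
    """Hypothetical specialist: labels the exception type."""
    return "customs_query" if "customs" in doc.lower() else "other"


def extraction_agent(doc: str) -> dict:
    """Hypothetical specialist: pulls shipment IDs (assumed 'SHP-' prefix)."""
    ids = [tok.strip(".,") for tok in doc.split() if tok.startswith("SHP-")]
    return {"shipment_ids": ids}


def decision_agent(category: str, fields: dict) -> dict:
    """Hypothetical specialist: chooses an action from the other agents' outputs."""
    if category == "customs_query" and fields["shipment_ids"]:
        return {"action": "request_customs_docs", "shipment_id": fields["shipment_ids"][0]}
    return {"action": "escalate"}


def controller(doc: str) -> dict:
    # Independent specialists run in parallel; the decision step needs both results.
    with ThreadPoolExecutor(max_workers=2) as pool:
        category_future = pool.submit(classification_agent, doc)
        fields_future = pool.submit(extraction_agent, doc)
        category, fields = category_future.result(), fields_future.result()
    return decision_agent(category, fields)
```

For example, `controller("Customs hold on SHP-4411, please advise.")` returns a request for customs documents for `SHP-4411`, while anything the decision agent cannot place is escalated.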

Multimodal inputs as standard

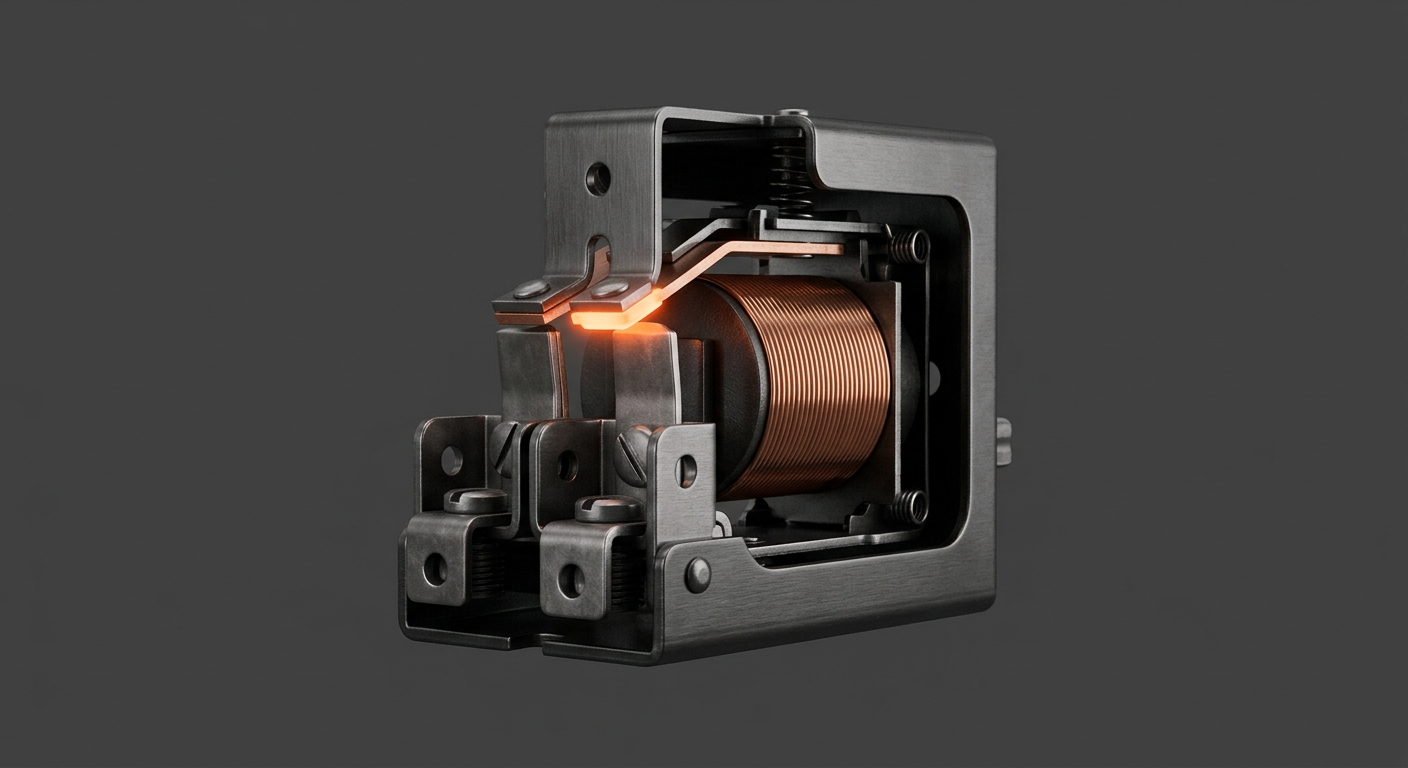

Early cognitive automation systems processed text. Current systems handle scanned documents, photos, voice recordings, and structured data simultaneously in a single pipeline. For manufacturing and logistics, this means camera feeds from production lines or warehouses can feed directly into cognitive automation workflows.

Process mining as input to cognitive design

Organisations increasingly use process mining to identify exactly where human judgment intervenes in otherwise automated flows. These intervention points are the prioritisation input for cognitive automation investment - replacing the informal “where does the team spend time on exceptions” conversation with logged, measurable data.
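
Finding intervention points from an event log amounts to counting where humans, rather than bots, performed activities. This sketch assumes each log event is a `(case_id, activity, resource_type)` tuple with the resource already mapped to `"human"` or `"bot"`; real process-mining logs usually record a user or bot ID that would need that mapping first.

```python
from collections import Counter


def intervention_points(event_log: list) -> list:
    """Rank activities by how often a human performed them.

    Returns (activity, human_count, human_events_per_case) tuples, most frequent first.
    """
    counts = Counter(activity for _, activity, resource in event_log if resource == "human")
    total_cases = len({case for case, _, _ in event_log})
    return [(activity, n, n / total_cases) for activity, n in counts.most_common()]


log = [
    ("c1", "receive", "bot"), ("c1", "match_po", "human"),
    ("c2", "receive", "bot"), ("c2", "match_po", "human"), ("c2", "approve", "human"),
    ("c3", "receive", "bot"), ("c3", "post", "bot"),
]
ranking = intervention_points(log)
```

Here `match_po` tops the ranking with human work in two of three cases, making it the first candidate for cognitive automation investment.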

Conclusion

Cognitive automation fills the gap that rule-based RPA cannot cross: the judgment-intensive, exception-heavy, unstructured portion of enterprise workflows that digital workers can now handle autonomously. For mid-sized companies, the practical starting point is identifying the highest-volume exception pattern in an existing automated or semi-automated process and deploying AI pre-processing there first. The productivity return is measurable within weeks; the architectural foundation it builds - connecting AI reasoning to existing automation infrastructure - compounds as more use cases are added.

Frequently Asked Questions

What is the difference between cognitive automation and RPA?

RPA executes fixed scripts on structured, predictable inputs. It cannot handle variation, ambiguity, or unstructured data. Cognitive automation adds AI capabilities - natural language understanding, computer vision, reasoning - so the system can read and interpret inputs before acting. In practice, cognitive automation handles the 15 to 40 percent of real-world cases where RPA would normally break and require human intervention.

Do we need to replace our existing RPA bots to add cognitive automation?

No. The most common deployment pattern is augmenting existing bots with an AI pre-processing layer. The AI handles classification and extraction of unstructured inputs; the validated structured data is passed to the existing bot. Exception rates for the bot drop substantially, and the transition typically requires little or no change to the bot's core logic, provided the AI layer delivers data in the format the bot already expects.

Which business processes are best suited to cognitive automation?

Processes that combine high volume with significant exception rates are the best fit: supplier invoice processing with non-standard formats, customer email triage, order management with missing or incorrect data, compliance document review, and logistics exception handling. The deciding factor is the exception rate in the current process - if it is above 15 percent and driven by input variation rather than policy ambiguity, cognitive automation is likely a strong fit.
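
The fit test described above can be written as a simple screening heuristic. The 15 percent exception threshold comes from the text; treating "driven by input variation" as more than half of exceptions is a hypothetical interpretation, and the function is a screening aid rather than a decision rule.

```python
def likely_fit(total_items: int, exception_items: int, exceptions_from_input_variation: int) -> bool:
    """Screen a process: high exception rate, mostly caused by input variation."""
    exception_rate = exception_items / total_items
    # Assumption: "driven by input variation" = more than half of exceptions.
    variation_share = exceptions_from_input_variation / exception_items if exception_items else 0.0
    return exception_rate > 0.15 and variation_share > 0.5
```

A process with 25 percent exceptions, most of them caused by non-standard formats, passes; one with the same exception rate but mostly caused by unclear policy does not, because better input interpretation would not resolve those cases.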

How long does a cognitive automation project take for a mid-sized company?

A focused deployment targeting one exception type in one process typically takes 6 to 12 weeks from kickoff to production. The main variables are data readiness (how well existing inputs are stored and accessible) and integration complexity with the target ERP or workflow system. Broad multi-process deployments run 4 to 9 months.

Is cognitive automation the same as hyperautomation?

Hyperautomation is a strategic initiative that combines multiple automation technologies - RPA, AI, process mining, BPM - across an organisation. Cognitive automation is a capability within that stack: the AI layer that handles judgment and unstructured inputs. A hyperautomation programme typically includes cognitive automation as one component alongside rule-based RPA and workflow orchestration.

What governance does cognitive automation require beyond standard RPA?

Cognitive automation requires additional controls that RPA does not: confidence thresholds with automatic human escalation, output sampling for accuracy auditing, model recalibration schedules, and documentation of which decisions the AI is and is not permitted to make autonomously. These controls should be defined before deployment and reviewed at least quarterly.